Introduction

Backpacking gear is characterized by attributes that are difficult to evaluate before purchase, including reliability under variable environmental conditions, durability over time, and usability under fatigue. This makes gear purchasing a prototypical “experience good” decision in which consumers face information asymmetry and elevated downside risk (Nelson, 1970). Under such conditions, markets tend to reward sellers and platforms that can generate credible trust signals that help consumers distinguish quality and fit. The classical “lemons” problem predicts that when quality is uncertain and credible signals are absent, the market can drift toward adverse selection, reducing overall decision confidence and weakening the informational value of price and reputation alone (Akerlof, 1970).

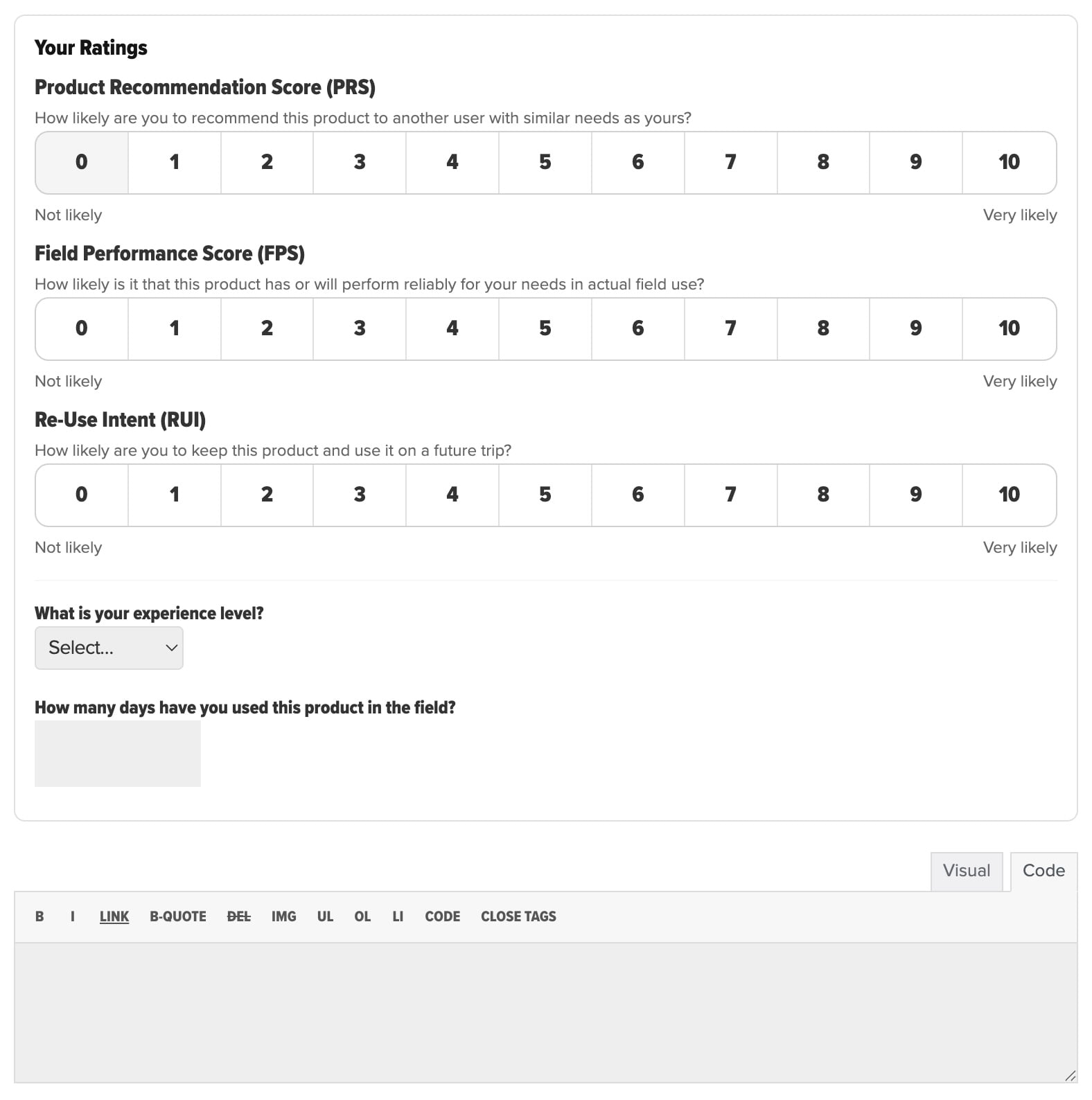

This article makes a case that the Backpacking Light Member Gear Review System is a deliberately structured signaling environment designed to increase perceived diagnosticity, strengthen source credibility cues, and raise the cost of opportunistic manipulation. It accomplishes this through (a) construct separation into three distinct judgment dimensions, (b) consistent 0–10 likelihood scaling with explicit question framing, (c) reviewer credibility metadata that is immediately visible at the point of consumption, and (d) product-level aggregation that preserves construct-level meaning rather than compressing it into an ambiguous single score. These design choices are consistent with established findings in persuasion psychology, information adoption, and the helpfulness of review-type communications (Hovland & Weiss, 1951).

Author’s Note

This article and the new Backpacking Light Member Gear Review Forum are the outcome of our ongoing consumer advocacy research on online influence, drawing on marketing psychology, behavioral science, and the study of online communities.

Context and Problem Formulation

Consumers shopping for backcountry gear are rarely searching for “the best” product in a universal sense. They are attempting to reduce uncertainty about whether a product will perform reliably in conditions that can vary widely across climate, terrain, duration, user physiology, and user competence. This is precisely the decision context in which conventional consumer review models perform poorly. A single overall star rating compresses multiple psychological constructs into one number and forces consumers to infer meaning without adequate warrant. It also encourages reviewers to express a global affective evaluation that may be dominated by fit, aesthetics, novelty, or price fairness rather than by field reliability.

Behavioral science predicts that when a signal is ambiguous, individuals rely more heavily on heuristics and priors, such as brand reputation, price, social conformity, and influencer attachment cues. In review environments, this increases susceptibility to norm-based cues that can be manipulated, and it reduces the extent to which consumers can use reviews as diagnostic evidence for their own needs (Filieri, 2015; Sussman & Siegal, 2003). In other words, the risk is not merely that review systems become “noisy.” The deeper risk is that consumers learn that reviews are not dependable, and then substitute weaker proxies for quality – influencer attachment being among the most dominant of modern mechanisms (Jordan, 2025).

The Behavioral Science of Trust Signals in Review Environments

A large portion of review consumption occurs under bounded attention. In these conditions, individuals tend to use source cues and structural cues as trust heuristics. Source credibility research demonstrates that perceived credibility of the communicator can shape the acceptance of information even when the message content is held constant, especially when receivers face uncertainty (Hovland & Weiss, 1951). This is important for gear reviews because the consumer is not only evaluating the product but also evaluating the epistemic reliability of the reviewer.

Dual-process models of persuasion further predict that people alternate between more analytic evaluation and faster heuristic processing depending on involvement, ability, and context (Petty & Cacioppo, 1986). Gear shopping commonly exhibits this pattern. Consumers often scan quickly to determine whether a product is “in the right neighborhood,” and then selectively invest more cognition once a short list forms. A review system optimized for trust must therefore function in both modes. It must provide high-quality heuristic cues at a glance while also preserving the conditions for more analytic interpretation.

Signaling theory provides an additional lens for why some review designs feel credible and others do not. In markets with information asymmetry, signals become believable when they are costly to fake or when they require real investment that low-quality or deceptive actors are unlikely to bear (Spence, 1973). In online review contexts, “cost” does not need to be monetary. It can be effort, specificity, or the provision of metadata that implies real exposure and therefore implies vulnerability to contradiction over time.

Finally, behavioral intention has special relevance to gear decisions. The Theory of Planned Behavior identifies intention as a primary proximal predictor of future behavior across many domains (Ajzen, 1991). In review settings, a measure that captures the reviewer’s intention to keep and reuse a product is not redundant with either satisfaction or reliability judgments. It represents a distinct construct with different implications for consumer decision-making.

Why Conventional Ratings Underperform for Technical Gear

Common rating systems emphasize either a single overall rating or a generalized “likelihood to recommend” measure. While a 0–10 recommendation metric has become widely recognized as a loyalty proxy in customer experience practice (Reichheld, 2003), recommendation alone is psychologically underspecified for technical gear. A reviewer may recommend a product to a certain class of user while personally choosing not to keep it, or may keep a product because it fills a niche role even while recognizing performance limitations. A single score forces the consumer to guess which underlying judgment the rating actually reflects.

Research on review helpfulness reinforces this critique. Work on helpfulness voting and review characteristics indicates that review depth and informational content shape perceived helpfulness, and that the nature of the product moderates what consumers find useful (Mudambi & Schuff, 2010). For experience goods, simplistic extremes and low-context evaluations tend to be less helpful than information that supports mental simulation, comparison, and diagnostic inference. This is especially salient for gear where consumers must imagine performance across scenarios rather than simply predict enjoyment.

A further limitation is ecosystem credibility. Review fraud and strategic manipulation have been documented as economically incentivized behaviors in large-scale review platforms, undermining consumer confidence and encouraging skepticism toward unstructured ratings (Luca & Zervas, 2015). When consumers believe a platform is vulnerable to gaming, they discount even legitimate reviews. This creates a trust collapse in which honest reviews are discounted along with fraudulent ones, so honest reviewers bear the cost of the system’s manipulability.

The Backpacking Light Instrument as Construct-Separated Measurement

The Backpacking Light Member Gear Review System uses three parallel 0–10 likelihood measures, explicitly framed as distinct judgments: Product Recommendation Score, Field Performance Score, and Re-Use Intent. In the review interface, each scale is presented with a clear prompt and consistent anchoring from “Not likely” to “Very likely.” The wording is consequential.

The Product Recommendation Score (PRS) is framed as the likelihood of recommending the product to another user “with similar needs,” which serves as a relevance constraint that addresses a pervasive interpretive problem in consumer ratings: consumers cannot tell whether a low rating reflects poor quality or simply a mismatch. This relevance framing is consistent with evidence that perceived relevance and perceived diagnosticity are central to whether people adopt review information (Filieri, 2015).

The Field Performance Score (FPS) isolates a reliability expectation under “actual field use.” This is structurally important because it prevents a global satisfaction judgment from laundering itself into an implied reliability claim. In technical gear, the psychological and practical cost of failure is often the dominant concern, and a system that explicitly measures reliability expectations will better map to the consumer’s risk model than a generalized satisfaction score.

The Re-Use Intent (RUI) measures the likelihood that the reviewer will keep and use the product on a future trip. From a behavioral science standpoint, this construct is valuable because it is closer to a behavioral commitment than an affective evaluation, and intention is widely treated as a meaningful antecedent to action (Ajzen, 1991). It is also a useful counterweight to novelty effects. A product that feels exciting on first exposure may yield high satisfaction but low reuse intent once the reviewer anticipates tradeoffs that emerge over time.

The system’s construct separation aligns with broader research on how people adopt online opinions. Information adoption models emphasize that people assess usefulness and credibility, often through both central and heuristic routes, and that these assessments mediate whether advice is adopted (Sussman & Siegal, 2003). A three-construct instrument increases the likelihood that at least one dimension maps cleanly onto the consumer’s immediate decision concern and reduces the cognitive burden of inferring what a single “overall” number means.

Credibility and Warranting Cues Embedded in Each Individual Review

A defining feature of the Backpacking Light system is that each individual rating is paired with reviewer metadata presented as part of the rating summary, including self-reported experience level and “product days in field.” For example, a reviewer may be labeled “Expert” and report a high number of days of field use with the product, with this metadata displayed alongside the three scores. This design choice operationalizes core mechanisms of credibility and signaling.

Source credibility research suggests that when consumers face uncertainty, credibility cues act as decisive heuristics (Hovland & Weiss, 1951). Experience level serves as an expertise cue, while days in field serve as an exposure cue that functions as a form of warranting. Exposure cues provide evidence of sampling opportunities for failure and performance variation. They also affect how consumers interpret the rating. A Field Performance Score of 8/10 paired with several weeks of field days is a fundamentally different signal than the same score paired with one weekend trip, even if both were honestly reported.
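As a minimal sketch of how one such review record could be represented, the Python below pairs the three 0–10 scores with the two credibility fields described above. The class name, field names, and validation rules are illustrative assumptions, not the actual Backpacking Light schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GearReview:
    """One member review: three 0-10 scores plus credibility metadata.

    All names here are hypothetical and chosen for illustration only.
    """
    product: str
    prs: int               # Product Recommendation Score, 0-10
    fps: int               # Field Performance Score, 0-10
    rui: int               # Re-Use Intent, 0-10
    experience_level: str  # self-reported, e.g. "Expert"
    days_in_field: int     # self-reported days of field use with the product

    def __post_init__(self):
        # Reject scores outside the 0-10 likelihood scale.
        for name in ("prs", "fps", "rui"):
            value = getattr(self, name)
            if not 0 <= value <= 10:
                raise ValueError(f"{name} must be between 0 and 10, got {value}")
        # Exposure cannot be negative.
        if self.days_in_field < 0:
            raise ValueError("days_in_field must be non-negative")


# Two honest reviews with the same FPS but very different exposure.
weekend = GearReview("Example Pad", prs=8, fps=8, rui=7,
                     experience_level="Intermediate", days_in_field=2)
season = GearReview("Example Pad", prs=8, fps=8, rui=7,
                    experience_level="Expert", days_in_field=28)
```

Keeping the metadata on the same record as the scores is what lets a reader see that the two 8/10 ratings rest on different amounts of field exposure.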

From a signaling theory perspective, requiring and displaying exposure metadata raises the cost of low-effort manipulation and increases the plausibility that the review is grounded in actual use (Spence, 1973). This does not eliminate deception, but it changes the equilibrium incentives. In typical star-rating systems, fake review production can be scaled cheaply because platforms ask for little more than an affective rating. In a system where the review is structurally tied to experience and exposure, deceptive actors must either fabricate additional details that increase inconsistency risk or abstain. The credibility benefit is not merely that the platform appears more serious. It is that the information architecture makes low-cost deception less attractive.

Aggregation Design as Preservation of Meaning Rather than Compression

At the product level, Backpacking Light presents aggregate values for each construct, accompanied by review count. The summary modal reports the number of reviews and displays the three aggregate (average) scores, one per construct. This approach avoids a common failure mode in rating aggregation: collapsing heterogeneous judgments into a single “overall” score that is easy to display but difficult to interpret. When a single number is reported, consumers tend to treat it as a general quality indicator even when it is actually an average across conflicting constructs and mismatched use cases.

By aggregating separately across recommendation, expected field performance, and reuse intent, the system preserves the possibility that a product can be reliable but niche, broadly recommendable but not personally retained, or personally retained despite recognized constraints. That preservation matters because perceived diagnosticity depends on whether information supports discrimination among alternatives for the consumer’s specific needs (Filieri, 2015).
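The difference between per-construct aggregation and a single blended score can be sketched in a few lines of Python. The review values below are invented for illustration, and the function names are hypothetical; this is not the platform's actual aggregation code.

```python
from statistics import fmean

CONSTRUCTS = ("prs", "fps", "rui")


def aggregate_by_construct(reviews):
    """Average each construct separately and report the review count."""
    if not reviews:
        return {"count": 0}
    summary = {"count": len(reviews)}
    for key in CONSTRUCTS:
        summary[key] = round(fmean(r[key] for r in reviews), 1)
    return summary


def blended_overall(reviews):
    """Collapse every score into one number (assumes a non-empty list)."""
    return round(fmean(r[key] for r in reviews for key in CONSTRUCTS), 1)


# Invented data for a "reliable but niche" product:
# low recommendation, high field performance and reuse intent.
reviews = [
    {"prs": 4, "fps": 9, "rui": 8},
    {"prs": 5, "fps": 9, "rui": 7},
    {"prs": 3, "fps": 8, "rui": 9},
]

print(aggregate_by_construct(reviews))  # {'count': 3, 'prs': 4.0, 'fps': 8.7, 'rui': 8.0}
print(blended_overall(reviews))         # 6.9
```

The blended 6.9 reads as a mediocre product, while the separated values show strong field performance and retention alongside a narrow audience.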

Review volume also has behavioral meaning. When the system displays the number of reviews alongside aggregates, it provides consumers with a basic sampling cue. Consumers routinely use volume cues to infer stability and reduce uncertainty, especially when they cannot inspect raw distributions. This is consistent with the broader literature on online opinion environments in which consumers use both informational cues and normative cues to evaluate products (Cheung et al., 2008; Filieri, 2015).

How the Backpacking Light Design Addresses the Known Weaknesses of Review Ecosystems

The Backpacking Light Member Gear Review System directly counters the most consequential limitations of common models through its measurement structure and its presentation logic. It reduces construct ambiguity by refusing to treat “overall satisfaction” as an adequate unit of analysis for performance-critical gear, replacing it with three distinct, interpretable judgments. It increases credibility by presenting expertise and exposure metadata at the moment the consumer evaluates the review, consistent with established findings on the influence of source credibility (Hovland & Weiss, 1951). It raises the cost of manipulation by requiring information that implies real-world use, which aligns with signaling theory’s account of credibility under asymmetric information (Spence, 1973).

It also aligns with what empirical work suggests consumers actually need from reviews. Studies of review helpfulness indicate that the most useful reviews are those that provide consumers with information that can be applied to decision making, rather than merely expressing valence (Mudambi & Schuff, 2010). When the Backpacking Light system forces reviewers to express judgments across recommendation, performance reliability, and reuse intent, it effectively compels reviewers to provide structured meaning that is inherently more diagnostic than a single overall rating.

Finally, the system is defensible as a response to a compromised review landscape. Evidence indicates that review fraud is not hypothetical and that incentives can produce systematic manipulation in open ecosystems (Luca & Zervas, 2015). The Backpacking Light design does not claim to eliminate manipulation through detection alone. Instead, it reduces manipulation pressure by improving signal quality, increasing effort requirements, and foregrounding credibility cues that allow consumers to discount low-warrant information.

Implications for Consumer Trust and for Backpacking Light’s Differentiation

In behavioral terms, Backpacking Light is not merely collecting ratings and amassing review volume. It is constructing a trust signal that functions under realistic cognitive constraints. The user interface supports heuristic decision-making by presenting three interpretable constructs and credibility cues in a compact summary, which is consistent with dual-process persuasion accounts (Petty & Cacioppo, 1986). At the same time, it supports deeper analytic evaluation by preserving construct-level nuance in both individual reviews and aggregate summaries, increasing perceived diagnosticity and thereby increasing the probability of information adoption (Filieri, 2015; Sussman & Siegal, 2003).

From an economic perspective, the system serves as an institutional response to quality uncertainty. Where Akerlof’s framework predicts market failure in the absence of credible signals, Backpacking Light’s system operates as a credibility-producing mechanism that allows higher-quality information to be distinguished from low-quality noise (Akerlof, 1970). In practical terms, the system improves consumer ability to answer the key question when choosing gear for backcountry pursuits: not “Do people like it?” but “Is it likely to work for someone like me, in real field use, and would an experienced person choose to carry it again?”

References

Ajzen, I. (1991). The theory of planned behavior. Organizational Behavior and Human Decision Processes, 50(2), 179–211.

Akerlof, G. A. (1970). The market for “lemons”: Quality uncertainty and the market mechanism. The Quarterly Journal of Economics, 84(3), 488–500. https://doi.org/10.2307/1879431

Cheung, C. M. K., Lee, M. K. O., & Rabjohn, N. (2008). The impact of electronic word-of-mouth: The adoption of online opinions in online customer communities. Internet Research, 18(3), 229–247. https://doi.org/10.1108/10662240810883290

Filieri, R. (2015). What makes online reviews helpful? A diagnosticity-adoption framework to explain informational and normative influences in e-WOM. Journal of Business Research, 68(6), 1261–1270. https://doi.org/10.1016/j.jbusres.2014.11.006

Hovland, C. I., & Weiss, W. (1951). The influence of source credibility on communication effectiveness. Public Opinion Quarterly, 15(4), 635–650. https://doi.org/10.1086/266350

Jordan, R. (2025). Outdoor Gear Journalism: Developing Trust Standards. Backpacking Light, October 27, 2025.

Luca, M., & Zervas, G. (2015, May 1). Fake it till you make it: Reputation, competition, and Yelp review fraud (Working paper).

Mudambi, S. M., & Schuff, D. (2010). What makes a helpful online review? A study of customer reviews on Amazon.com. MIS Quarterly, 34(1), 185–200.

Nelson, P. (1970). Information and consumer behavior. Journal of Political Economy, 78(2), 311–329. https://doi.org/10.1086/259630

Petty, R. E., & Cacioppo, J. T. (1986). Communication and persuasion: Central and peripheral routes to attitude change. Springer.

Reichheld, F. F. (2003). The one number you need to grow. Harvard Business Review, 81(12), 46–54.

Spence, M. (1973). Job market signaling. The Quarterly Journal of Economics, 87(3), 355–374. https://doi.org/10.2307/1882010

Sussman, S. W., & Siegal, W. S. (2003). Informational influence in organizations: An integrated approach to knowledge adoption. Information Systems Research, 14(1), 47–65. https://doi.org/10.1287/isre.14.1.47.14767

Discussion


Companion forum thread to: Behavioral-Science Foundations of the Backpacking Light Member Gear Review System as a High-Fidelity Trust Signal

Backpacking gear is an experience good: performance and reliability are hard to judge before purchase. This article explains how the Backpacking Light Member Gear Review System creates stronger trust signals by separating recommendation, field performance, and re-use intent, then pairing each review with experience level and days in the field. Product-level aggregates preserve nuance, helping shoppers interpret fit, risk, and credibility.

I’m getting an error message.

There may be a few other variables. For example, a person’s size will affect sleeping pad comfort. When AK speaks of cold, it’s on a whole different level than when I speak of cold. Age can make a difference and so forth. It’s understandable that some folks may be reluctant to provide personal details, but it does help with the reviews.

Sorry that I have to say this, but to me it reads like the author is trying to defend the BPL review system. It also comes across as book smart; reality, not so much.

The BPL reviews were flooded by staff members and people associated with BPL. If you look at the initial reviews, a majority of them were glowing 9’s and 10’s out of 10. First of all, the reviews were not anonymous. That being said, people who have a differing view may not want to respond to prevent people from hurting their feelings. Forum members (in general) tend to be polite. I know myself; I did not respond to a lot of reviews with my thoughts for that very same reason.

As was pointed out earlier, since the review was based upon that person’s opinion, how does that relate to my own experience? For example, I have slept on the snow in a NeoAir in a 30-degree quilt and have been perfectly fine. My wife would be freezing. How do people evaluate the ratings the same way? One person’s good may be a 6 while another’s is an 8.

Finally, good surveys and reviews are one of the most difficult things to craft. There are people who do this professionally and there are courses that are taught on how to get the desired information. In my career, I have worked with people in Human Factors whose job is to evaluate initial Alpha and Beta products to see if we met the customer demands. This is a huge effort that takes a lot of skill. If BPL wants to craft a review, I suggest that they seek out professionals to help in this matter.

While a lot of thought may have gone into this article, IMO it is a swing and a miss. I do not see the credibility of the BPL Gear Reviews. In fact, I see it causing more harm than good. My 2 cents.

The Backpacking Light Member Gear Review System

Product Recommendation Score – 2/10

Field Performance Score – 4/10

Re-Use Intent – 2/10

Jon –

Yes! So, you get it, clearly.

The goal here is to move *towards* an evidence-based execution of a review “environment” that increases trust between reviewer and reader. The claims made in the article are based on a pretty large body of research in behavioral and consumer psychology studying consumer behavior. This field has been well-established for many decades, and the internet has introduced many complex anomalies that we’re all trying to sort out.

The effectiveness of any review system will result from aggregation and user contribution.

You seem like the perfect profile for a review contributor, because you are able to break the barrier of not wanting to hurt anyone’s feelings, and are critical-minded. That’s exactly what we need in modern technical gear reviews – not affection for the gear.

Contributions from users like you will strengthen the system – you have expertise, experience, and an honest lens.

A suggestion for calculating aggregate scores: use medians rather than averages (if you are not already doing so). Medians are not sensitive to outliers, so one anomalously high or low score cannot drive the aggregate rating.
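To illustrate the point with made-up numbers (a quick sketch, not actual BPL review data), compare how the mean and the median react when one anomalous score is added to an otherwise consistent set:

```python
from statistics import fmean, median

# Hypothetical scores for one construct on one product.
typical = [8, 8, 7, 9, 8]
with_outlier = typical + [0]  # one anomalously low score added

print(round(fmean(typical), 1), median(typical))            # 8.0 8
print(round(fmean(with_outlier), 1), median(with_outlier))  # 6.7 8.0
```

The single 0 pulls the mean down by more than a point while the median stays at 8. With only a handful of reviews, though, either statistic is a rough guide, which is one reason the review count matters alongside the aggregate.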

Jon – you make some good points. I would add another source of distortion – people tend to become advocates of gear as a way of affirming their own judgment. No one likes to admit “I was a dope for buying this”.

That said, I am not as alarmed by the proliferation of 9’s and 10’s on reviews. I think other graybeards on BPL will support me in saying that backpacking gear is objectively waaay better today than it was decades ago. The design, materials and manufacturing processes employed now range from pretty good to outstanding for almost all products. The market is sufficiently competitive that you can be fairly confident that value corresponds with price. Not always — but that’s one of the things reviews are for.

No review system can be perfect. But ask yourself which you trust more — results of a Google search for “best backpacking gear” or BPL reviews. The question kind of answers itself.

I appreciate the reviews. I find them handy and a starting point for further research. I don’t necessarily take them at face value. I find comparisons much more valuable. It’s important to be a wise consumer and do your diligence. I think most here are here for that reason. Learning how to read reviews is an art that should be learned.

I am going to apologize in advance to the OP reviewer. It is not my intent to denigrate your review. I just want to point out what I consider issues. Below are 2 reviews that caught my eye. I had thoughts, but didn’t respond for obvious reasons.

First, the reviews were done by staff members, and the ratings were extremely high (IMO). Needless to say, staff members tend to have more authority than a lot of participants. This is probably true for new subscribers to the forum.

Second, at the top of the article is a section on where you can purchase these items: Garage Grown Gear for one. This raises concerns about quid pro quo.

The GSI Outdoors Compact Scraper

A Staff/Associated member rated this a 9/10/10. My first thought was “is this a joke?”. This is a site for light weight backpacking where we are aiming to reduce weight by thoughtful selection of gear and value-added pieces. If I need to, I use sticks, sand and stones to scrub out my pots. For tricky dishes, I often use parchment paper as an anti-stick coating.

SilverAnt 0.8L Titanium Water Bottle Review

A Staff/Associated member rated this an 8/9/9. It’s $87.99. I guess it solves a problem when money is no concern. If you are going to go that route, you would probably be better off with a thin-walled stainless steel vessel. I do agree that microplastics are a big concern. Leading culprits are paint and car tires. How much microplastic do we get from water bottles? Spitballing here, but in the course of a year, it is probably less than from driving on the road. There are some plastic bottles that have lower microplastic contributions.

At the end of the day, these products were given a high rating. Followed up by an article about Behavioral-Science Foundations of the Backpacking Light Member Gear Review System as a High-Fidelity Trust Signal. To me there is a big mismatch between what is desired and what has been stated. Will it correct itself over the long run? I doubt it. My 2 cents.

Most common drawbacks with gear reviews online whether backpacking or other:

– reviewers not stating their needs and preferred tradeoffs as part of the review

– reviewers not stating their use cases. Do they move fast and sweat, go long and slow, sleep warm, sleep cold, etc etc

– reviewers not sampling breadth of readily available equipment then allocating 10s. Makes the scale almost meaningless. 10/10 compared to what?

Finally, like Jon, “In my career, I have worked with people in Human Factors whose job is to evaluate initial Alpha and Beta products” (extensively for years). This is hard work. Its absence leads to the last and most often ignored liability of online reviews: lack of meaningful performance targets. For example, I love the MVTR tests, but what’s the lower limit for a good MVTR and under what use cases?

These are the norm. Online these then get upvoted when they confirm the bias of the upvoter.

It’s a trade-off. Of course it’s polite to not say this stuff. But is it polite or helpful to others to not share helpful improvements?

I agree with much of what Jon posted. I’ve seen some reviews recently that seem totally off-the-wall. But personally, I would be very unlikely to post a review that disagreed with a review posted by the owner of the site or by a staff member – i.e. authority figures. And in general, I would hesitate to post a review that disagreed with the OP of that review, because it would seem like a personal insult, with little upside. I found myself wanting to add a review to the products I liked, and not wanting to contribute to the products that I didn’t appreciate. So in the end, I decided not to contribute. The format of the review threads, with an initial review and responses, is problematic in this regard.

So just based on my own emotional response, I suspect that there are strong biases built into this system. Nobody expects otherwise, to be honest, but I don’t think the system is worthy of a pseudo-scientific meta-analysis.

There will always be flaws. Nothing is perfect. You strive for perfection knowing you’ll never reach it, but if you don’t the results can be catastrophic. As teachers and as students, analyze everything. Realize what works for one person might not work for you and vice versa. If a certain ad sets off a red flag, take that into account. If you see it, realize others do as well. If there’s a better way or if you believe there’s a better product or one that may better serve someone’s needs, bring it up. You can critique or you can improve and make it work. Realize the average consumer searching for reviews is pretty savvy.

maybe have more numbers – size, weight, when did you get it, link to manufacturers web site

you could have a link to where it can be purchased and say that you get a small fee if the product is purchased there – it costs money to run a website and it’s reasonable to have ads to pay for it

I don’t look too much at the number of stars a product was given by the user, but I do look at the negative reviews to determine if that characteristic would be important to me. So, maybe have a section “this is why I would buy this” and “this is why I wouldn’t”. Like, maybe a product wore out early but it was used in an extreme way that wouldn’t apply to me, like mountain climbing or arctic expeditions

or someone could say “this pack is no good because it doesn’t have any pockets”

I don’t want pockets so I would know to ignore the low number of stars for that

It starts at the beginning

IMO, the review results do not match the tone and objective of the main article. There are a lot of references and discussion about Human Behavioral Science as a Foundation for this format. What many people do is read through the comment sections and see if that aligns with their viewpoint/perspective. So as I said earlier (IMO) the current process is a swing and a miss. There are better results by just looking up gear reviews and sorting the wheat from the chaff yourself. The above process overstates and underdelivers (show me the data on how this method is better than other reviews and give examples).

Believe it or not, a good review system IS ROCKET SCIENCE! It takes a tremendous amount of work, energy and refinement to get it right and trustworthy. That, and you need a large sample size to fill out the space. My 2 cents.

Here is an example of the SilverAnt 0.8L Titanium Water Bottle Review on Amazon. It does not have the three-tiered rating system of BPL, but BPL will not have the statistical numbers to provide a robust viewpoint.

4.1/5 Stars 240 reviews

And here is a review that aligns with my thoughts. The person bought it for show, fancy titanium that he paid a ridiculous price for as a status symbol as he notes there are much cheaper options out there.

The Reddit Ultralight subreddit has a basic review template. It works and no one questions the veracity of the reviews.

I don’t think price is a variable when determining quality or use. Nor do I see it as a status symbol. If I pull my high dollar backpack out of the trunk of my 13 year old Toyota with peeling paint, please don’t think I’m being a snob.

The gear reviewed and recommended is usually top price. Comparisons with top performing but much less expensive gear would be a big help. Sometimes that last couple percent costs an arm and a leg. Context is helpful. I’m a gear nerd so I almost always find excellent much lower cost alternatives. But I think high trust high utility reviews wouldn’t leave so much of this as an exercise for the reader.

Etiquette. I feel this is the place to discuss it. I feel a second companion thread is sometimes necessary to prevent thread drift. Does an alternative review of similar products belong in the same thread? Comparisons are valuable. Should they be brought up? If so how?

Absolutely, I think that the threads are set up to accommodate multiple reviews for a given product. But the point I was making in the other thread is that IMO a post that reads like an alternative review should be an actual review from someone who has used the product.

I don’t know the answer. I find some value in the opinions. I think they may lead to thread drift. Maybe I’m doing that here?

Maybe you’re right. People should just post what they want to post, and the readers can sort it out.

I’m not right. If a thread drifts too far away from actual reviews, it will start losing value. If I was researching, I’d maybe read the first post and ignore the rest, then spend my time looking elsewhere.

This is a good discussion and potentially we should fork the conversation to a new thread but I’ll keep it here for the moment.

Here’s my thought: if the thread is reviewing ABC water bottle then posts should be reviews of and questions about ABC water bottles not why users think that water bottles in general are useless/useful or that they don’t like water bottles or that they prefer DEF brand or why they think water bottles are _______.

The thread is for people to review that brand of bottle (and some follow up questions). Sound good?

Also figure most gear sold is tested in “normal” backpacking conditions. It’s not like the typical ultralight backpacker is running from polar bears in the Arctic Winter. I gave pretty much the ma on the Nu-25 headlamp thread, but it pretty much works as advertised.

So think there will be a base amount of functionality when retailing gear, but always some tradeoffs. Still that’s assumed, so maybe a lower temperature used for quilts and battery operated electronics, user height for shelters (assuming they’ve used the manufacturer’s guidance in selecting the right shelter for their height). Think there are some quantitative measurements that can be added for categories of gear.

That said, there are intangibles (such as liking an elasticized opening for a quilt to keep out drafts even though one bonus of a quilt is freedom to move limbs), though other users may “feel” differently. Some review thoughts will need to be descriptive, anecdotal evidence (liked so much, .. bought another if the original was lost), etc…
