Introduction

Backpacking gear is characterized by attributes that are difficult to evaluate before purchase, including reliability under variable environmental conditions, durability over time, and usability under fatigue. This makes gear purchasing a prototypical “experience good” decision in which consumers face information asymmetry and elevated downside risk (Nelson, 1970). Under such conditions, markets tend to reward sellers and platforms that can generate credible trust signals that help consumers distinguish quality and fit. The classical “lemons” problem predicts that when quality is uncertain and credible signals are absent, the market can drift toward adverse selection, reducing overall decision confidence and weakening the informational value of price and reputation alone (Akerlof, 1970).

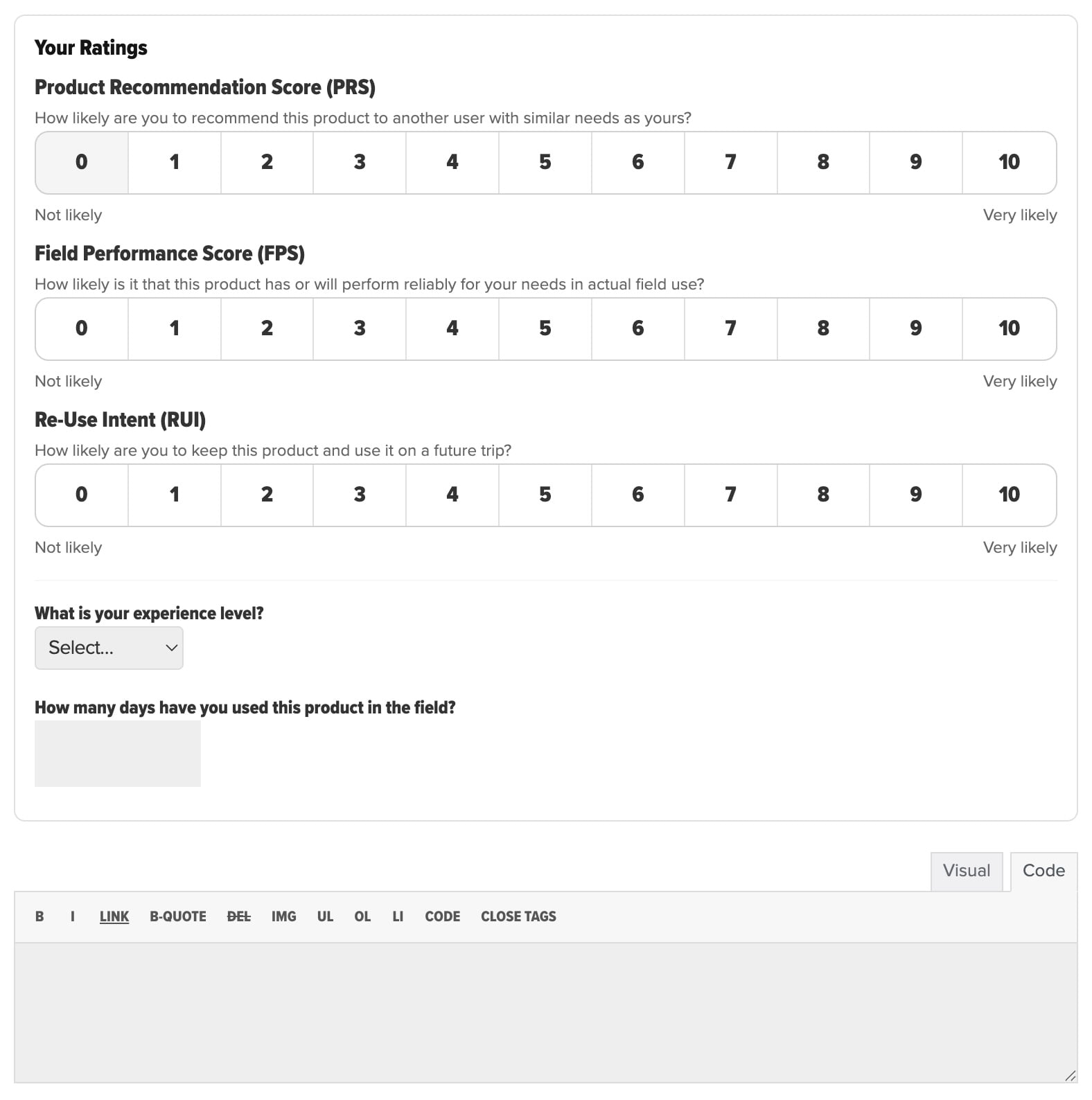

This article makes a case that the Backpacking Light Member Gear Review System is a deliberately structured signaling environment designed to increase perceived diagnosticity, strengthen source credibility cues, and raise the cost of opportunistic manipulation. It accomplishes this through (a) construct separation into three distinct judgment dimensions, (b) consistent 0–10 likelihood scaling with explicit question framing, (c) reviewer credibility metadata that is immediately visible at the point of consumption, and (d) product-level aggregation that preserves construct-level meaning rather than compressing it into an ambiguous single score. These design choices are consistent with established findings in persuasion psychology, information adoption, and the helpfulness of review-type communications (Hovland & Weiss, 1951).

Author’s Note

This article and the new Backpacking Light Member Gear Review Forum are outcomes of our ongoing consumer advocacy research on online influence, which draws on marketing psychology, behavioral science, and the study of online communities.

Context and Problem Formulation

Consumers shopping for backcountry gear are rarely searching for “the best” product in a universal sense. They are attempting to reduce uncertainty about whether a product will perform reliably in conditions that can vary widely across climate, terrain, duration, user physiology, and user competence. This is precisely the decision context in which conventional consumer review models perform poorly. A single overall star rating compresses multiple psychological constructs into one number and forces consumers to infer meaning without adequate warrant. It also encourages reviewers to express a global affective evaluation that may be dominated by fit, aesthetics, novelty, or price fairness rather than by field reliability.

Behavioral science predicts that when a signal is ambiguous, individuals rely more heavily on heuristics and priors, such as brand reputation, price, social conformity, and influencer attachment cues. In review environments, this increases susceptibility to norm-based cues that can be manipulated, and it reduces the extent to which consumers can use reviews as diagnostic evidence for their own needs (Filieri, 2015; Sussman & Siegal, 2003). In other words, the risk is not merely that review systems become “noisy.” The deeper risk is that consumers learn that reviews are not dependable and then substitute weaker proxies for quality, with influencer attachment among the most dominant of these today (Jordan, 2025).

The Behavioral Science of Trust Signals in Review Environments

A large portion of review consumption occurs under bounded attention. In these conditions, individuals tend to use source cues and structural cues as trust heuristics. Source credibility research demonstrates that perceived credibility of the communicator can shape the acceptance of information even when the message content is held constant, especially when receivers face uncertainty (Hovland & Weiss, 1951). This is important for gear reviews because the consumer is not only evaluating the product but also evaluating the epistemic reliability of the reviewer.

Dual-process models of persuasion further predict that people alternate between more analytic evaluation and faster heuristic processing depending on involvement, ability, and context (Petty & Cacioppo, 1986). Gear shopping commonly exhibits this pattern. Consumers often scan quickly to determine whether a product is “in the right neighborhood,” and then selectively invest more cognition once a short list forms. A review system optimized for trust must therefore function in both modes. It must provide high-quality heuristic cues at a glance while also preserving the conditions for more analytic interpretation.

Signaling theory provides an additional lens for why some review designs feel credible and others do not. In markets with information asymmetry, signals become believable when they are costly to fake or when they require real investment that low-quality or deceptive actors are unlikely to bear (Spence, 1973). In online review contexts, “cost” does not need to be monetary. It can be effort, specificity, or the provision of metadata that implies real exposure and therefore implies vulnerability to contradiction over time.

Finally, behavioral intention has special relevance to gear decisions. The Theory of Planned Behavior identifies intention as a primary proximal predictor of future behavior across many domains (Ajzen, 1991). In review settings, a measure that captures the reviewer’s intention to keep and reuse a product is not redundant with either satisfaction or reliability judgments. It represents a distinct construct with different implications for consumer decision-making.

Why Conventional Ratings Underperform for Technical Gear

Common rating systems emphasize either a single overall rating or a generalized “likelihood to recommend” measure. While a 0–10 recommendation metric has become widely recognized as a loyalty proxy in customer experience practice (Reichheld, 2003), recommendation alone is psychologically underspecified for technical gear. A reviewer may recommend a product to a certain class of user while personally choosing not to keep it, or may keep a product because it fills a niche role even while recognizing performance limitations. A single score forces the consumer to guess which underlying judgment the rating actually reflects.

Research on review helpfulness reinforces this critique. Work on helpfulness voting and review characteristics indicates that review depth and informational content shape perceived helpfulness, and that the nature of the product moderates what consumers find useful (Mudambi & Schuff, 2010). For experience goods, simplistic extremes and low-context evaluations tend to be less helpful than information that supports mental simulation, comparison, and diagnostic inference. This is especially salient for gear where consumers must imagine performance across scenarios rather than simply predict enjoyment.

A further limitation is ecosystem credibility. Review fraud and strategic manipulation have been documented as economically incentivized behaviors in large-scale review platforms, undermining consumer confidence and encouraging skepticism toward unstructured ratings (Luca & Zervas, 2015). When consumers believe a platform is vulnerable to gaming, they discount even legitimate reviews. This creates a trust collapse in which honest reviews bear the cost of the system’s manipulability.

The Backpacking Light Instrument as Construct-Separated Measurement

The Backpacking Light Member Gear Review System uses three parallel 0–10 likelihood measures, explicitly framed as distinct judgments: Product Recommendation Score, Field Performance Score, and Re-Use Intent. In the review interface, each scale is presented with a clear prompt and consistent anchoring from “Not likely” to “Very likely.” The wording is consequential.

The Product Recommendation Score (PRS) is framed as the likelihood of recommending the product to another user “with similar needs,” which serves as a relevance constraint that addresses a pervasive interpretive problem in consumer ratings: consumers cannot tell whether a low rating reflects poor quality or simply a mismatch. This relevance framing is consistent with evidence that perceived relevance and perceived diagnosticity are central to whether people adopt review information (Filieri, 2015).

The Field Performance Score (FPS) isolates a reliability expectation under “actual field use.” This is structurally important because it prevents a global satisfaction judgment from laundering itself into an implied reliability claim. In technical gear, the psychological and practical cost of failure is often the dominant concern, and a system that explicitly measures reliability expectations will better map to the consumer’s risk model than a generalized satisfaction score.

The Re-Use Intent (RUI) measures the likelihood that the reviewer will keep and use the product on a future trip. From a behavioral science standpoint, this construct is valuable because it is closer to a behavioral commitment than an affective evaluation, and intention is widely treated as a meaningful antecedent to action (Ajzen, 1991). It is also a useful counterweight to novelty effects. A product that feels exciting on first exposure may yield high satisfaction but low reuse intent once the reviewer anticipates tradeoffs that emerge over time.

The system’s construct separation aligns with broader research on how people adopt online opinions. Information adoption models emphasize that people assess usefulness and credibility, often through both central and heuristic routes, and that these assessments mediate whether advice is adopted (Sussman & Siegal, 2003). A three-construct instrument increases the likelihood that at least one dimension maps cleanly onto the consumer’s immediate decision concern and reduces the cognitive burden of inferring what a single “overall” number means.

Credibility and Warranting Cues Embedded in Each Individual Review

A defining feature of the Backpacking Light system is that each individual rating is paired with reviewer metadata presented as part of the rating summary, including self-reported experience level and “product days in field.” For example, a reviewer may be labeled “Expert” and report a high number of days of field use with the product, with this metadata displayed alongside the three scores. This design choice operationalizes core mechanisms of credibility and signaling.
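As an illustration only, a single review in this scheme could be modeled as a small record that carries the three scores together with the credibility metadata. The Python sketch below is a minimal example under stated assumptions: the class name `GearReview` and every field name are hypothetical, since the article does not publish a schema. The only constraints taken from the text are the 0–10 range and the presence of experience level and days in field.

```python
from dataclasses import dataclass

# Hypothetical schema: the article names the constructs and metadata
# but does not specify how they are stored.
@dataclass(frozen=True)
class GearReview:
    product: str
    recommendation: int      # Product Recommendation Score (PRS), 0-10
    field_performance: int   # Field Performance Score (FPS), 0-10
    reuse_intent: int        # Re-Use Intent (RUI), 0-10
    experience_level: str    # self-reported, e.g. "Expert"
    days_in_field: int       # self-reported product days in field

    def __post_init__(self) -> None:
        # Reject scores outside the 0-10 likelihood scale.
        for name in ("recommendation", "field_performance", "reuse_intent"):
            value = getattr(self, name)
            if not 0 <= value <= 10:
                raise ValueError(f"{name} must be between 0 and 10, got {value}")
        if self.days_in_field < 0:
            raise ValueError("days_in_field cannot be negative")

    def summary(self) -> str:
        # Scores and credibility metadata are always rendered together.
        return (
            f"PRS {self.recommendation}/10 | FPS {self.field_performance}/10 | "
            f"RUI {self.reuse_intent}/10 "
            f"({self.experience_level}, {self.days_in_field} days in field)"
        )

# Invented example values, for illustration only.
review = GearReview("Example Tent", 7, 8, 9, "Expert", 42)
print(review.summary())
```

Keeping the metadata on the same record as the scores mirrors the design point above: a rating is never shown without its warrant.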

Source credibility research suggests that when consumers face uncertainty, credibility cues act as decisive heuristics (Hovland & Weiss, 1951). Experience level serves as an expertise cue, while days in field serve as an exposure cue that functions as a form of warranting. Exposure cues provide evidence of sampling opportunities for failure and performance variation. They also affect how consumers interpret the rating. A Field Performance Score of 8/10 paired with several weeks of field days is a fundamentally different signal than the same score paired with one weekend trip, even if both were honestly reported.

From a signaling theory perspective, requiring and displaying exposure metadata raises the cost of low-effort manipulation and increases the plausibility that the review is grounded in actual use (Spence, 1973). This does not eliminate deception, but it changes the equilibrium incentives. In typical star-rating systems, fake review production can be scaled cheaply because platforms ask for little more than an affective rating. In a system where the review is structurally tied to experience and exposure, deceptive actors must either fabricate additional details that increase inconsistency risk or abstain. The credibility benefit is not merely that the platform appears more serious. It is that the information architecture makes low-cost deception less attractive.

Aggregation Design as Preservation of Meaning Rather than Compression

At the product level, Backpacking Light presents aggregate values for each construct, accompanied by review count. The summary modal reports the number of reviews and displays the three aggregate (average) scores, one per construct. This approach avoids a common failure mode in rating aggregation: collapsing heterogeneous judgments into a single “overall” score that is easy to display but difficult to interpret. When a single number is reported, consumers tend to treat it as a general quality indicator even when it is actually an average across conflicting constructs and mismatched use cases.

By aggregating separately across recommendation, expected field performance, and reuse intent, the system preserves the possibility that a product can be reliable but niche, broadly recommendable but not personally retained, or personally retained despite recognized constraints. That preservation matters because perceived diagnosticity depends on whether information supports discrimination among alternatives for the consumer’s specific needs (Filieri, 2015).
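The arithmetic behind construct-level aggregation is simple, and a short sketch makes the contrast with a single overall score concrete. This Python example assumes reviews are stored as dictionaries with hypothetical keys (`recommendation`, `field_performance`, `reuse_intent`) and uses a plain arithmetic mean, since the article describes the aggregates as averages. The sample scores are invented for illustration.

```python
# Hypothetical key names; the article does not define a storage format.
CONSTRUCTS = ("recommendation", "field_performance", "reuse_intent")

def aggregate(reviews):
    """Return the review count and one mean per construct (no overall score)."""
    summary = {"review_count": len(reviews)}
    if not reviews:
        return summary
    for construct in CONSTRUCTS:
        total = sum(r[construct] for r in reviews)
        summary[construct] = round(total / len(reviews), 1)
    return summary

# Invented scores for a product that is dependable but narrow in appeal.
reviews = [
    {"recommendation": 6, "field_performance": 9, "reuse_intent": 9},
    {"recommendation": 5, "field_performance": 8, "reuse_intent": 10},
    {"recommendation": 4, "field_performance": 9, "reuse_intent": 8},
]
print(aggregate(reviews))
```

With these invented scores, the summary describes a product that reviewers expect to perform well and intend to keep, yet recommend only moderately. That is the "reliable but niche" pattern a single averaged number would blur.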

Review volume also has behavioral meaning. When the system displays the number of reviews alongside aggregates, it provides consumers with a basic sampling cue. Consumers routinely use volume cues to infer stability and reduce uncertainty, especially when they cannot inspect raw distributions. This is consistent with the broader literature on online opinion environments in which consumers use both informational cues and normative cues to evaluate products (Cheung et al., 2008; Filieri, 2015).
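One way to see why review count works as a stability cue is the standard error of the mean, which shrinks roughly with the square root of the number of reviews. The sketch below is illustrative only. It assumes reviews are independent and honestly reported, the function name is hypothetical, and nothing in the article says Backpacking Light computes or displays this quantity.

```python
from math import sqrt
from statistics import mean, stdev

def mean_with_standard_error(scores):
    """Return (mean, standard error of the mean) for a list of 0-10 scores."""
    if len(scores) < 2:
        raise ValueError("need at least two scores to estimate spread")
    return mean(scores), stdev(scores) / sqrt(len(scores))

# Invented data: the same mix of opinions, seen in 3 reviews and in 30.
few = [6, 8, 10]
many = few * 10

mean_few, se_few = mean_with_standard_error(few)
mean_many, se_many = mean_with_standard_error(many)
print(f"3 reviews:  mean={mean_few}, standard error={se_few:.2f}")
print(f"30 reviews: mean={mean_many}, standard error={se_many:.2f}")
```

Both samples have the same average, but the larger one pins that average down more tightly. This is the intuition a displayed review count lets consumers apply informally.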

How the Backpacking Light Design Addresses the Known Weaknesses of Review Ecosystems

The Backpacking Light Member Gear Review System directly counters the most consequential limitations of common models through its measurement structure and its presentation logic. It reduces construct ambiguity by refusing to treat “overall satisfaction” as an adequate unit of analysis for performance-critical gear, replacing it with three distinct, interpretable judgments. It increases credibility by presenting expertise and exposure metadata at the moment the consumer evaluates the review, consistent with established findings on the influence of source credibility (Hovland & Weiss, 1951). It raises the cost of manipulation by requiring information that implies real-world use, which aligns with signaling theory’s account of credibility under asymmetric information (Spence, 1973).

It also aligns with what empirical work suggests consumers actually need from reviews. Studies of review helpfulness indicate that the most useful reviews are those that provide consumers with information that can be applied to decision making, rather than merely expressing valence (Mudambi & Schuff, 2010). When the Backpacking Light system forces reviewers to express judgments across recommendation, performance reliability, and reuse intent, it effectively compels reviewers to provide structured meaning that is inherently more diagnostic than a single overall rating.

Finally, the system is defensible as a response to a compromised review landscape. Evidence indicates that review fraud is not hypothetical and that incentives can produce systematic manipulation in open ecosystems (Luca & Zervas, 2015). The Backpacking Light design does not claim to eliminate manipulation through detection alone. Instead, it reduces manipulation pressure by improving signal quality, increasing effort requirements, and foregrounding credibility cues that allow consumers to discount low-warrant information.

Implications for Consumer Trust and for Backpacking Light’s Differentiation

In behavioral terms, Backpacking Light is not merely collecting ratings and amassing review volume. It is constructing a trust signal that functions under realistic cognitive constraints. The user interface supports heuristic decision-making by presenting three interpretable constructs and credibility cues in a compact summary, which is consistent with dual-process persuasion accounts (Petty & Cacioppo, 1986). At the same time, it supports deeper analytic evaluation by preserving construct-level nuance in both individual reviews and aggregate summaries, increasing perceived diagnosticity and thereby increasing the probability of information adoption (Filieri, 2015; Sussman & Siegal, 2003).

From an economic perspective, the system serves as an institutional response to quality uncertainty. Where Akerlof’s framework predicts market failure in the absence of credible signals, Backpacking Light’s system operates as a credibility-producing mechanism that allows higher-quality information to be distinguished from low-quality noise (Akerlof, 1970). In practical terms, the system improves consumer ability to answer the key question when choosing gear for backcountry pursuits: not “Do people like it?” but “Is it likely to work for someone like me, in real field use, and would an experienced person choose to carry it again?”

References

Ajzen, I. (1991). The theory of planned behavior. Organizational Behavior and Human Decision Processes, 50(2), 179–211.

Akerlof, G. A. (1970). The market for “lemons”: Quality uncertainty and the market mechanism. The Quarterly Journal of Economics, 84(3), 488–500. https://doi.org/10.2307/1879431

Cheung, C. M. K., Lee, M. K. O., & Rabjohn, N. (2008). The impact of electronic word-of-mouth: The adoption of online opinions in online customer communities. Internet Research, 18(3), 229–247. https://doi.org/10.1108/10662240810883290

Filieri, R. (2015). What makes online reviews helpful? A diagnosticity-adoption framework to explain informational and normative influences in e-WOM. Journal of Business Research, 68(6), 1261–1270. https://doi.org/10.1016/j.jbusres.2014.11.006

Hovland, C. I., & Weiss, W. (1951). The influence of source credibility on communication effectiveness. Public Opinion Quarterly, 15(4), 635–650. https://doi.org/10.1086/266350

Jordan, R. (2025, October 27). Outdoor gear journalism: Developing trust standards. Backpacking Light.

Luca, M., & Zervas, G. (2015, May 1). Fake it till you make it: Reputation, competition, and Yelp review fraud (Working paper). Boston University

Mudambi, S. M., & Schuff, D. (2010). What makes a helpful online review? A study of customer reviews on Amazon.com. MIS Quarterly, 34(1), 185–200.

Nelson, P. (1970). Information and consumer behavior. Journal of Political Economy, 78(2), 311–329. https://doi.org/10.1086/259630

Petty, R. E., & Cacioppo, J. T. (1986). Communication and persuasion: Central and peripheral routes to attitude change. Springer.

Reichheld, F. F. (2003). The one number you need to grow. Harvard Business Review, 81(12), 46–54.

Spence, M. (1973). Job market signaling. The Quarterly Journal of Economics, 87(3), 355–374. https://doi.org/10.2307/1882010

Sussman, S. W., & Siegal, W. S. (2003). Informational influence in organizations: An integrated approach to knowledge adoption. Information Systems Research, 14(1), 47–65. https://doi.org/10.1287/isre.14.1.47.14767

Discussion


Hmmmm, I am interested in buying a Porsche Cayman. There are 4 models to choose from. Do I have to read 4 different reviews? How do I interpret a review on a 1-10 scale if I don’t know what the scale is? Hard to compare a Porsche Cayman if there is not a comparison to a BMW, Maserati or Tesla. Please explain how BPL’s version of a Behavioral-Science Foundations methodology resolves this issue. Anyone? My 2 cents.

I may push back on that a little. Some of the most useful information about your opinion of ABC bottles is what you think about bottles in general. If you’re a bottle-hating bladder fanboy but love this bottle, I’m interested and better informed if I know why you usually hate bottles. If you’re a life-long bottle user but hate this bottle, I know more than if you just rate it a 1 or 2.

Quite often the best reviews I see of a product are where the reviewer hated something for certain reasons that make it likely I’ll love it for the same reasons (or vice versa).

An interesting problem about TRUST.

Should I just trust the reviewer’s opinion without thinking critically about it?

Should I just trust the reviewer’s opinion without hearing what other readers think – be the others experienced or not?

Is there room (in BPL) for contrary opinions?

I do have various Ti water bottles and Ti thermos flasks. They do look very elegant I am sure.

Did I buy them? No – they were usually included with other things sent to me for review.

I did NOT ask for them.

Were they by way of being bribes? Possibly, but a futile attempt!

Do I ever use any of them? No: such bling is too small and too heavy.

(Too expensive to buy too, but that’s irrelevant.)

Difficult, ennit?

Cheers

One trick that has been used is to look at mean and standard deviations of the results. Low sigma implies consensus. High sigma usually indicates disagreement or a different interpretation of the question or a very different world view. My 2 cents.

GSI Outdoors Compact Scraper

Note Sigma on Usability: complete disagreement. Probably different world view on this one.
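For anyone who wants to try the mean-and-sigma check described above, here is a minimal Python sketch. The scores are made up, and the 2.0 cutoff for calling something "disagreement" is an arbitrary assumption, not a value from BPL or from the posts above.

```python
from statistics import mean, pstdev

def spread_report(scores, cutoff=2.0):
    """Return (mean, sigma, label) for one question's 0-10 scores.

    The cutoff is a hypothetical threshold chosen for illustration.
    """
    sigma = pstdev(scores)  # population standard deviation of the scores
    label = "consensus" if sigma < cutoff else "disagreement"
    return round(mean(scores), 2), round(sigma, 2), label

# Invented scores: one question where reviewers agree, one where they split.
agree = [8, 8, 9, 8, 9]
split = [1, 2, 9, 10, 9]

print("agree:", spread_report(agree))
print("split:", spread_report(split))
```

A high sigma does not say who is right. It only flags that the average is hiding two or more camps, which is a cue to read the individual reviews.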

A basic principle of statistics is the more samples you have the more accurate the results. That’s why mainstream gear on websites like Backcountry, where you might have hundreds of reviews on a product, tends to provide a better evaluation.

Wise to always be cynical though. Many companies get employees or fanboys to post puff piece reviews on gear that are far from objective. They just flood sites with glowing praise of an item. YouTubers often can’t be trusted either. Many are shills for gear companies and hardly spend any significant time with the plethora of stuff they review. Similar thing with bloggers. If they have affiliate links on a product beware. Everybody knows they get a small cut off each sale.

I agree with Monty and Roger. My main question is still unanswered. How does BPL’s implementation of a Behavioral-Science Foundations of the Backpacking Light Member Gear Review System as a High-Fidelity Trust Signal meet its objectives of High Fidelity? High Trust? Where are the metrics that show that it is successful? Seems like it is all hat and no cattle. My 2 cents.

Ha!

Monty gives abstract theory in his first paragraph, and then destroys the implementation in the second one.

Both paras are correct of course.

It is the deliberate misuse of statistics which brings my scorn, and the 2nd para shows how it is misused.

Does anyone believe reviews on Backpacker or TripAdvisor or YouTube these days? I do have a bridge for sale, great value, huge bargain . . .

Cheers

You got me Roger, now I know you studied logic too (darnit).

It’s not the number of good reviews I pay attention to but rather the bad ones. A quality piece of gear that holds up and functions well is hardly going to get panned at all.

Well, yes, but sometimes the bad reviews are written by the opposition! It happens.

Cheers

That’s true Roger, the enemy’s fabricated bad reviews are known as online torpedoes.

The thing is, I read a review, then I think for myself. I guess some may be unable to do that? It’s not an end all.

I usually just look at the bad reviews and try to sort out the ones that point out a legit defect or issue (that may or may not be an issue for me) vs the ones that are from people misapplying an item or not understanding the use-case it was designed for.

There are definitely a lot of junk reviews. I don’t like the practice on commercial sites of importing the reviews from the product manufacturer’s website.

That’s what I do.

It’s not that I haven’t done the same thing, but frankly I find all this talk a bit elitist and patronizing.

Hopefully this is not patronizing, but given the current state of LLMs, the formatting of data and the user interface are not nearly as important as the accumulation of large datasets. For example, here is the summary that Gemini provided of reviews for the Nitecore NU25 UL Headlamp on the ultralight subreddit (sorry, the formatting was obviously FUBAR from my cut-and-paste, but hopefully you get the idea).

When I asked how many reviews the summary was based on, the LLM responded that “The summary provided above was not based on a single article, but rather a synthesis of the consensus from approximately 6 to 8 major discussion threads on r/Ultralight spanning the last two years,” and went on to describe the timeline and context of the various threads (launch, long-term use, recent shift to NU20 classic), including 300-400 unique comments and user experiences.

Personally, I have always found the reddit UI clunky and hard to use compared to a conventional Internet forum with organized sub-fora, but maybe that’s just because I haven’t spent enough time there. It does seem like a very active and diverse community.

——————————–

The Nitecore NU25 is arguably the most discussed piece of electronics on r/Ultralight. However, the release of the new NU25 UL (400 Lumens, USB-C) sparked significant controversy because it replaced a beloved “legend” (the original micro-USB version).

Here is a summary of the reviews and sentiment found on the subreddit regarding the Nitecore NU25 UL:

The Verdict in a Nutshell

The general consensus on r/Ultralight is mixed. While users appreciate the move to USB-C, many consider the “UL” version a functional downgrade from the original due to a confusing interface, the loss of specific beloved lighting modes, and an uncomfortable stock strap.

The Good (Pros)

USB-C Charging: This is the primary reason users upgrade. It allows hikers to carry a single cable for their phone, power bank, and headlamp, eliminating the dreaded micro-USB cable.

Brighter Max Output: The jump to 400 lumens is noticeable. Users find the “Mix” mode (Flood + Spot) very effective for finding trail markers or navigating technical terrain in the dark.

Battery Indicator: The 4-dot battery status indicator is seen as an improvement over the blinking button of the previous generation.

Lockout Mode: It effectively prevents the light from turning on in your pack, though some find the button combination to activate it a bit “fiddly.”

The Bad (Cons)

The “Cord” Headband: The stock “UL” dual-cord headband is a frequent complaint.

Comfort: Many users report it digs into the forehead or feels tight, leaving marks.

Tangling: The dual-cord design tangles easily in pockets compared to a simple single-strap band.

Glow-in-the-dark: While marketed as a feature, some find the glowing cord annoying when trying to sleep.

Confusing Interface (UI): This is the #1 complaint. The original had a simple logic; the new one uses two buttons (Power and Mode) with a mix of short presses, long presses, and double clicks that many find non-intuitive, especially when tired or wearing gloves.

Loss of “Moonlight” & High CRI Modes:

Too Bright: The lowest white setting is now 6 lumens. Hardcore ultralighters loved the old 1-lumen mode for reading or camp chores without blinding others/wasting battery.

Color Quality: The new version ditched the “High CRI” (warm, natural light) auxiliary LED that was perfect for reading maps or cooking. The new light is cooler/harsher.

“Medium” Mode Efficiency: The “Medium” setting is now ~60 lumens (vs. the old ~38 lumens). Users argue this is brighter than necessary for walking on a trail and drains the battery faster than the old “sweet spot” setting.

The “NU20 Classic” Plot Twist

In late 2024/2025 threads, a new sentiment emerged. Nitecore released the NU20 Classic, which is effectively the old body style but updated with USB-C.

Current Sentiment: Many r/Ultralight members are now recommending the NU20 Classic over the NU25 UL because it restores the simpler interface and form factor they originally loved, while finally adding USB-C.

Summary Table

| Feature | NU25 UL (New) | NU25 (Old/Original) | r/Ultralight Preference |
|---|---|---|---|
| Charging | USB-C | Micro-USB | New (UL) |
| Max Brightness | 400 Lumens | 360 Lumens | New (UL) |
| Lowest Mode | 6 Lumens | 1 Lumen | Old (Huge preference here) |
| Interface | 2 Buttons (Complex) | 2 Buttons (Simple) | Old |
| Light Quality | Cool White | High CRI Option | Old |
| Strap | Dual Shock Cord | Standard Band | Old (often modded) |

By “patronizing”, I believe most folks have the common sense to read a review and realize that it’s just one person’s opinion. I don’t expect reviews to be perfect. You’re saying these shoes work for me or this bottle works for me, and this is why. Accumulated data sets can be more accurate, yet there’s the problem of exclusion. Lesser known products. Going strictly off of reviews, we’d all be carrying the same equipment, wearing the same clothes, and eating the same food.

Totally agree.
